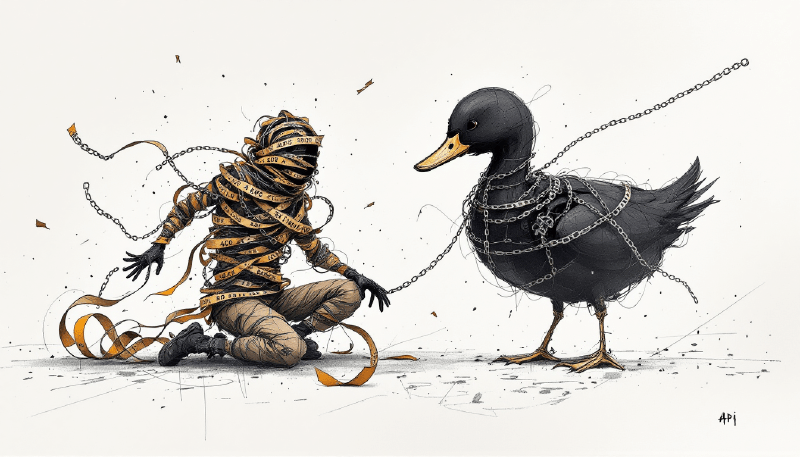

The first time I opened the BlackDuck API documentation, I thought building a vulnerability collector would take a couple weeks. Fetch some projects, grab their vulnerabilities, write them to a database. How hard could it be?

Famous last words.

The initial build did take a couple weeks. But then came the months of discovering that every assumption I’d made was slightly wrong — undocumented Accept headers, pagination quirks, URL patterns that contradict each other, and the slow realization that the API’s real complexity isn’t technical at all. It’s organizational. I’ve been refining and adding to this collector ever since, and at this point I’m not sure if I’m building a tool or if the BlackDuck API is building my character.

This is everything I wish someone had told me before I started.

Everything Is a Hierarchy, and You Can’t Skip Levels

BlackDuck organizes data in a strict hierarchy that mirrors how software projects are structured:

Projects
└── Versions
    └── Components
        ├── Origins
        │   ├── Upgrade Guidance
        │   └── Dependency Paths
        └── Vulnerabilities

This seems obvious when you see it laid out, but the implication caught me off guard: you cannot skip levels. Want vulnerabilities for a component? You need to know the project first, then the version, then navigate to the component. There’s no “give me all vulnerabilities across everything” endpoint. This hierarchy drives every single API call you’ll make, and it means your collector needs to walk the tree — every time.

The Token Exchange Dance

BlackDuck uses a two-step authentication process that tripped me up on day one. You have an API token — a long-lived credential — but you can’t use it directly for API calls. Instead, you exchange it for a short-lived bearer token:

POST /api/tokens/authenticate

Authorization: token {your-api-token}

The response contains your bearer token and its expiration:

{

"bearerToken": "eyJhbGciOiJIUzI1NiIs...",

"expiresInMilliseconds": 7200000

}

Here’s what bit me: bearer tokens typically expire in 2 hours (7200000ms). That sounds generous until you’re processing thousands of projects and your token expires mid-collection. I learned this the hard way — a 3-hour run that crashed at the 2-hour mark with no recovery. Now I build in a 1-minute buffer and refresh proactively before expiration. Nothing kills a long-running collection like an expired token mid-process.

# Calculate expiration with a 1-minute safety buffer
# (requires: from datetime import datetime, timedelta, timezone)
token_expires = datetime.now(timezone.utc) + timedelta(
    milliseconds=expires_in_ms - 60000
)
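One way to wire that buffer into a collector is a small cache that re-exchanges the API token whenever the bearer token is about to lapse. This is a minimal sketch rather than anything from a BlackDuck client library: `BearerTokenCache`, the `exchange` callable, and the injectable `clock` are hypothetical names, and `exchange` is assumed to perform the POST shown above and return the parsed JSON body.

```python
import time
from typing import Callable, Optional


class BearerTokenCache:
    """Caches a bearer token and refreshes it shortly before it expires."""

    def __init__(
        self,
        exchange: Callable[[], dict],
        buffer_seconds: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        # `exchange` is assumed to call /api/tokens/authenticate and return JSON.
        self._exchange = exchange
        self._buffer = buffer_seconds
        self._clock = clock
        self._token: Optional[str] = None
        self._refresh_at = 0.0

    def get(self) -> str:
        """Return a usable token, exchanging the API token again if needed."""
        if self._token is None or self._clock() >= self._refresh_at:
            body = self._exchange()
            self._token = body["bearerToken"]
            lifetime = body["expiresInMilliseconds"] / 1000.0
            # Refresh one buffer-length early so a request never carries a stale token.
            self._refresh_at = self._clock() + max(lifetime - self._buffer, 0.0)
        return self._token
```

Calling `cache.get()` before every request keeps the refresh logic in one place, so a three-hour run simply triggers a second exchange instead of failing.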

The Accept Header Maze That Almost Broke Me

With most APIs, I can skim the auth docs, find the endpoints I need, and start building. BlackDuck doesn’t work that way. It expects you to read the documentation for every single endpoint just to figure out the correct Accept header — and even then, the docs don’t always explain why a particular header matters or what happens if you get it wrong. Spoiler: what happens is a 406 Not Acceptable with zero useful context about what you should have sent instead.

The root of the problem is versioned media types. Different endpoints require different Accept headers, and there’s no obvious pattern to predict which one you need.

Here’s the mapping I discovered through trial and error — and I do mean trial and error, because the documentation is incomplete at best:

| Endpoint Type | Accept Header |
|---|---|
| Projects | application/vnd.blackducksoftware.project-detail-7+json |
| Versions | application/vnd.blackducksoftware.project-detail-5+json |
| Components | application/vnd.blackducksoftware.bill-of-materials-6+json |
| Vulnerabilities | application/vnd.blackducksoftware.vulnerability-4+json |
| Upgrade Guidance | application/vnd.blackducksoftware.component-detail-5+json |
| Policy Rules | application/vnd.blackducksoftware.policy-5+json |
| Licenses | application/vnd.blackducksoftware.component-detail-5+json |

Here’s a trick that saved me hours of debugging: if you get a 406 error, try the request again without the Accept header. Some endpoints are more forgiving than their documentation suggests. I can’t explain why this works. It just does.
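The lookup table and the 406 fallback can live in one helper. The sketch below is an illustration under assumptions: the dictionary keys are labels I made up, `send` is a hypothetical callable that performs the GET and returns `(status, parsed_json)`, and the single retry mirrors the trick described above rather than any documented behavior.

```python
from typing import Callable, Dict, Tuple

# Media types copied from the table above; the keys are made-up labels.
ACCEPT_HEADERS: Dict[str, str] = {
    "projects": "application/vnd.blackducksoftware.project-detail-7+json",
    "versions": "application/vnd.blackducksoftware.project-detail-5+json",
    "components": "application/vnd.blackducksoftware.bill-of-materials-6+json",
    "vulnerabilities": "application/vnd.blackducksoftware.vulnerability-4+json",
    "upgrade-guidance": "application/vnd.blackducksoftware.component-detail-5+json",
}

Response = Tuple[int, dict]  # (HTTP status, parsed JSON body)


def get_with_accept_fallback(
    send: Callable[[str, Dict[str, str]], Response],
    url: str,
    kind: str,
    token: str,
) -> Response:
    """GET `url` with the mapped Accept header; on 406, retry once without it."""
    headers = {"Authorization": f"Bearer {token}"}
    accept = ACCEPT_HEADERS.get(kind)
    if accept:
        headers["Accept"] = accept
    status, body = send(url, headers)
    if status == 406 and "Accept" in headers:
        # Some endpoints accept a request with no Accept header at all.
        retry_headers = {k: v for k, v in headers.items() if k != "Accept"}
        status, body = send(url, retry_headers)
    return status, body
```

Keeping the mapping in a single dictionary means a newly discovered header is a one-line change instead of a hunt through every call site.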

Walking the API: Endpoint by Endpoint

Let me walk through each level of the hierarchy, because the details matter and the docs don’t tell the full story.

Projects

GET /api/projects/{project-id}

Authorization: Bearer {token}

Accept: application/vnd.blackducksoftware.project-detail-7+json

Example Response:

{

"name": "payment-service",

"description": "Core payment processing microservice",

"createdAt": "2024-03-15T10:30:00.000Z",

"createdBy": "svc-scanner",

"updatedAt": "2025-10-20T14:22:00.000Z",

"projectGroup": "https://blackduck.example.com/api/project-groups/a1b2c3d4-5678-90ab-cdef-111111111111",

"projectTier": 1,

"customSignatureEnabled": false,

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479",

"links": [

{

"rel": "versions",

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions"

},

{

"rel": "users",

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/users"

}

]

}

}

Projects are the top-level containers. One thing I want to call out: see that _meta.href field? That’s the canonical URL for this resource. Always extract IDs from these URLs, not from elsewhere in the response. I made the mistake of trying to build URLs manually early on, and it broke in subtle ways when project groups were involved.
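Pulling an ID out of a canonical URL is a one-liner, but it is worth centralizing. This is a minimal sketch of the `extract_id_from_url` helper that a later snippet in this article calls; the implementation is my assumption, and it only makes sense for URLs whose last path segment is an ID (a `/hierarchy` URL, for example, would return `hierarchy`).

```python
from urllib.parse import urlparse


def extract_id_from_url(href: str) -> str:
    """Return the last path segment of a resource URL, ignoring any query string."""
    path = urlparse(href).path.rstrip("/")
    return path.rsplit("/", 1)[-1]
```

For the project above, `extract_id_from_url(project["_meta"]["href"])` yields the UUID at the end of the `href`.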

Project Hierarchies

If a project has a projectGroup, you can fetch its organizational hierarchy — this turned out to be more useful than I initially expected for reporting:

GET /api/project-groups/{group-id}/hierarchy

Authorization: Bearer {token}

Example Response:

{

"name": "Engineering",

"description": "All engineering projects",

"children": [

{

"name": "Platform Team",

"children": [

{

"name": "payment-service",

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479"

}

},

{

"name": "user-service",

"_meta": {

"href": "https://blackduck.example.com/api/projects/a1b2c3d4-5678-90ab-cdef-222222222222"

}

}

],

"_meta": {

"href": "https://blackduck.example.com/api/project-groups/b2c3d4e5-6789-01ab-cdef-333333333333"

}

}

],

"_meta": {

"href": "https://blackduck.example.com/api/project-groups/a1b2c3d4-5678-90ab-cdef-111111111111/hierarchy"

}

}

This nested structure helps you understand organizational ownership. When leadership asks “how many critical vulns does the Platform team have?” — this is how you answer that.
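To answer that kind of question you need each project mapped to the groups above it. The recursive walk below is a sketch that assumes the response shape shown in the example: group nodes carry `children`, and project nodes are recognizable by `/api/projects/` in their `_meta.href`. The function name is hypothetical.

```python
from typing import Dict, List, Tuple


def map_projects_to_groups(
    node: dict, trail: Tuple[str, ...] = ()
) -> Dict[str, List[str]]:
    """Map each project href to the list of group names above it."""
    owners: Dict[str, List[str]] = {}
    href = node.get("_meta", {}).get("href", "")
    if "/api/projects/" in href:
        # Leaf: a project. Record the groups walked through to reach it.
        owners[href] = list(trail)
        return owners
    # Otherwise treat the node as a group and descend into its children.
    for child in node.get("children", []):
        owners.update(map_projects_to_groups(child, trail + (node["name"],)))
    return owners
```

With the sample response, payment-service maps to `["Engineering", "Platform Team"]`, which is the key you would join against per-project vulnerability counts.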

Versions

GET /api/projects/{project-id}/versions

Authorization: Bearer {token}

Accept: application/vnd.blackducksoftware.project-detail-5+json

Example Response:

{

"totalCount": 3,

"items": [

{

"versionName": "main",

"phase": "RELEASED",

"distribution": "INTERNAL",

"createdAt": "2024-03-15T10:35:00.000Z",

"createdBy": "svc-scanner",

"settingUpdatedAt": "2025-10-20T14:22:00.000Z",

"source": "CUSTOM",

"securityRiskProfile": {

"counts": [

{"countType": "CRITICAL", "count": 2},

{"countType": "HIGH", "count": 8},

{"countType": "MEDIUM", "count": 15},

{"countType": "LOW", "count": 23}

]

},

"licenseRiskProfile": {

"counts": [

{"countType": "HIGH", "count": 1},

{"countType": "MEDIUM", "count": 3},

{"countType": "LOW", "count": 45}

]

},

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888",

"links": [

{

"rel": "components",

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components"

}

]

}

},

{

"versionName": "develop",

"phase": "DEVELOPMENT",

"distribution": "INTERNAL",

"createdAt": "2024-06-01T08:00:00.000Z",

"settingUpdatedAt": "2025-10-18T09:15:00.000Z",

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/d7c6b5a4-3928-1c0d-9e8f-777777777777"

}

}

],

"_meta": {

"totalCount": 3,

"links": []

}

}

Versions represent different states of your project — branches, releases, tags. The key fields to pay attention to:

- versionName: The human-readable name (e.g., “main”, “v2.0.0”)

- settingUpdatedAt/updatedAt: Timestamps for finding the “latest” version

- _meta.href: The canonical URL for this version

One of the initial hurdles I ran into was that teams across the org had wildly different version structures. Some had several versions all with semantic version numbers. Others just called theirs develop or main — or something even less descriptive. In the absence of other data, one approach to picking the “right” version is comparing settingUpdatedAt (falling back to updatedAt) timestamps. It’s not surefire, but it might be the right solution — until one of those other nondescript versions gets a scan triggered and suddenly none of your resource IDs match. That’s when you watch a massive wave of tickets get closed and new ones get opened, and you get to explain to engineering why their backlog just reshuffled overnight. I’ll dig deeper into the version naming chaos later — it’s a bigger problem than it sounds.
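The timestamp comparison can be sketched as follows. It assumes the timestamp format shown in the example response (`2025-10-20T14:22:00.000Z`) and uses hypothetical helper names; versions with neither field are skipped rather than guessed at.

```python
from datetime import datetime
from typing import Optional


def parse_timestamp(value: str) -> datetime:
    """Parse timestamps like 2025-10-20T14:22:00.000Z into aware datetimes."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def pick_latest_version(versions: list) -> Optional[dict]:
    """Return the version with the newest settingUpdatedAt (or updatedAt)."""
    dated = []
    for version in versions:
        stamp = version.get("settingUpdatedAt") or version.get("updatedAt")
        if stamp:
            dated.append((parse_timestamp(stamp), version))
    if not dated:
        return None
    return max(dated, key=lambda pair: pair[0])[1]
```

Because the choice can flip whenever another version is rescanned, it is worth persisting which version was picked so a change shows up as an explicit event instead of a silent ticket reshuffle.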

Components: The Bill of Materials

GET /api/projects/{project-id}/versions/{version-id}/components?limit=1000

Authorization: Bearer {token}

Accept: application/vnd.blackducksoftware.bill-of-materials-6+json

Example Response:

{

"totalCount": 247,

"items": [

{

"componentName": "lodash",

"componentVersionName": "4.17.15",

"component": "https://blackduck.example.com/api/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa",

"componentVersion": "https://blackduck.example.com/api/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/versions/v1v2v3v4-5678-90ab-cdef-bbbbbbbbbbbb",

"usages": ["DYNAMICALLY_LINKED"],

"matchTypes": ["FILE_EXACT"],

"reviewStatus": "NOT_REVIEWED",

"policyStatus": "IN_VIOLATION",

"securityRiskProfile": {

"counts": [

{"countType": "CRITICAL", "count": 1},

{"countType": "HIGH", "count": 2},

{"countType": "MEDIUM", "count": 0},

{"countType": "LOW", "count": 1}

]

},

"origins": [

{

"name": "npmjs",

"externalNamespace": "npmjs",

"externalId": "lodash/4.17.15",

"origin": "https://blackduck.example.com/api/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/versions/v1v2v3v4-5678-90ab-cdef-bbbbbbbbbbbb/origins/o1o2o3o4-5678-90ab-cdef-cccccccccccc",

"_meta": {

"links": [

{

"rel": "upgrade-guidance",

"href": "https://blackduck.example.com/api/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/versions/v1v2v3v4-5678-90ab-cdef-bbbbbbbbbbbb/origins/o1o2o3o4-5678-90ab-cdef-cccccccccccc/upgrade-guidance"

}

]

}

}

],

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa",

"links": [

{

"rel": "vulnerabilities",

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/vulnerabilities"

},

{

"rel": "comments",

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/comments"

}

]

}

}

],

"_meta": {

"totalCount": 247,

"links": [

{

"rel": "paging-next",

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components?offset=1000&limit=1000"

}

]

}

}

Components are the dependencies in your project. Each one contains:

- componentName/componentVersionName: Human-readable identifiers

- component/componentVersion: URLs to the component definitions

- origins[]: Array of source information (npm, maven, pypi, etc.)

- _meta.links[]: Navigation links to related resources

One thing that surprised me: the origins[] array is embedded in the component response — you don’t need a separate API call to get it. Small mercy.
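The example response also carries a `paging-next` link, which suggests a simple way to collect every page of a listing. The generator below is a sketch under that assumption (I am inferring the paging behavior from the sample response only); `fetch_json` is a hypothetical callable that GETs a URL and returns the parsed body.

```python
from typing import Callable, Iterator, Optional


def next_page_url(page: dict) -> Optional[str]:
    """Return the href of the paging-next link, if the page has one."""
    for link in page.get("_meta", {}).get("links", []):
        if link.get("rel") == "paging-next":
            return link.get("href")
    return None


def iter_items(fetch_json: Callable[[str], dict], first_url: str) -> Iterator[dict]:
    """Yield items from every page, following paging-next links until none remain."""
    url: Optional[str] = first_url
    seen = set()
    while url and url not in seen:
        seen.add(url)  # guard against a link that points back at a fetched page
        page = fetch_json(url)
        yield from page.get("items", [])
        url = next_page_url(page)
```

Comparing the number of yielded items against `totalCount` afterwards is a cheap sanity check that no page was dropped.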

Why I Was Wrong to Ignore Origins

At first glance, origins seem like metadata you can skip — “lodash is lodash, right?” I was wrong. Origins turned out to be essential, and ignoring them early on cost me a rewrite.

1. The Same Component Can Have Multiple Origins

A single component in your BOM might appear via different package ecosystems:

{

"componentName": "guava",

"componentVersionName": "31.1-jre",

"origins": [

{

"name": "maven",

"externalNamespace": "com.google.guava",

"externalId": "com.google.guava:guava:31.1-jre"

},

{

"name": "gradlePluginPortal",

"externalNamespace": "com.google.guava",

"externalId": "com.google.guava:guava:31.1-jre"

}

]

}

Why would this happen? Different build tools, different resolution mechanisms, or the same library declared in multiple places. Each origin may have different metadata and different links.

2. Upgrade Guidance Lives on Origins, Not Components

This one burned me. The upgrade-guidance link is attached to origins, not to components directly. If you skip origins, you can’t get remediation recommendations:

Component
└── Origin (has upgrade-guidance link) ✓
    └── Upgrade Guidance

vs.

Component (no upgrade-guidance link) ✗

This makes sense when you think about it: the right upgrade target depends on where the package came from, and the same library can be published to more than one registry. But the API doesn’t exactly advertise this distinction.

3. Dependency Paths Require Origin IDs

To understand transitive dependency relationships, you need the origin ID:

GET /api/project/{project-id}/version/{version-id}/origin/{origin-id}/dependency-paths

Without the origin, you can’t trace how a vulnerable component entered your dependency tree.

4. Origins Identify the Package Ecosystem

The origin’s externalNamespace and externalId tell you exactly how to reference this dependency in your build files:

| Origin | externalId | How to Fix |
|---|---|---|
| npmjs | lodash/4.17.15 | Update package.json |
| maven | org.apache.logging.log4j:log4j-core:2.14.1 | Update pom.xml |
| pypi | requests/2.25.0 | Update requirements.txt |
| nuget | Newtonsoft.Json/12.0.3 | Update .csproj |

Your ticketing system should include this information so engineers know exactly where to make the change. I can’t tell you how many times an engineer came back to me asking “okay, but where is this dependency?” Include the ecosystem. Always.
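A small lookup is enough to turn an origin into that hint. The sketch below assumes the origin's `name` field carries the ecosystem label, as it does in the sample responses; the mapping simply restates the table above, and both the function name and the output format are hypothetical.

```python
from typing import Dict

# Restates the table above: origin name -> file the engineer usually edits.
MANIFEST_BY_ORIGIN: Dict[str, str] = {
    "npmjs": "package.json",
    "maven": "pom.xml",
    "pypi": "requirements.txt",
    "nuget": ".csproj",
}


def remediation_hint(origin: dict) -> str:
    """Build a one-line hint naming the dependency and where to change it."""
    name = origin.get("name", "")
    external_id = origin.get("externalId", "unknown")
    manifest = MANIFEST_BY_ORIGIN.get(name)
    if manifest is None:
        # Unknown ecosystem: still show the origin so the engineer can search for it.
        return f"{external_id} (origin: {name or 'unknown'})"
    return f"{external_id}: update {manifest}"
```

Unknown ecosystems fall through to a plain label instead of raising, so a new origin type never blocks ticket creation.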

5. Different Origins Can Have Different Vulnerability Exposure

In rare cases, the same component version from different origins might have different vulnerability profiles. This can happen with:

- Repackaged components (someone republishes a library with modifications)

- Internal registries that mirror public packages with delays

- Platform-specific builds that include different native dependencies

6. Origins Are Required for License Analysis

License information is often tied to the origin, not just the component. The same code published to npm vs. a private registry might have different license declarations.

The Practical Implication:

When processing components, always iterate through origins:

async def collect_findings(components):
    findings = []
    for component in components:
        for origin in component.get("origins", []):
            origin_id = extract_id_from_url(origin["origin"])

            # Get upgrade guidance for THIS origin
            upgrade_guidance = await fetch_upgrade_guidance(origin)

            # Get dependency paths for THIS origin
            dep_paths = await fetch_dependency_paths(origin_id)

            # Include origin info in your findings (one finding per origin)
            findings.append({
                "component": component["componentName"],
                "version": component["componentVersionName"],
                "origin_ecosystem": origin.get("externalNamespace"),
                "origin_id": origin.get("externalId"),
                "upgrade_guidance": upgrade_guidance,
                "dependency_paths": dep_paths
            })
    return findings

Don’t assume one origin per component. Process each origin separately. I learned this the hard way.

Vulnerabilities

Vulnerabilities are accessed via links discovered in component responses — this is the HATEOAS pattern that BlackDuck uses throughout:

# Find vulnerabilities link in component._meta.links[]
vuln_url = None  # stays None if the component exposes no such link
for link in component["_meta"]["links"]:
    if link["rel"] == "vulnerabilities":
        vuln_url = link["href"]
        break

GET {vulnerabilities-url}

Authorization: Bearer {token}

Accept: application/vnd.blackducksoftware.vulnerability-4+json

Example Response:

{

"totalCount": 4,

"items": [

{

"vulnerabilityName": "CVE-2021-23337",

"description": "Lodash versions prior to 4.17.21 are vulnerable to Command Injection via the template function.",

"vulnerabilityPublishedDate": "2021-02-15T13:15:00.000Z",

"vulnerabilityUpdatedDate": "2023-06-12T08:30:00.000Z",

"baseScore": 7.2,

"overallScore": 7.2,

"exploitabilitySubscore": 1.8,

"impactSubscore": 5.9,

"severity": "HIGH",

"source": "NVD",

"remediationStatus": "NEW",

"cweId": "CWE-94",

"relatedVulnerability": "https://blackduck.example.com/api/vulnerabilities/CVE-2021-23337",

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/vulnerabilities/CVE-2021-23337",

"links": [

{

"rel": "related-vulnerability",

"href": "https://blackduck.example.com/api/vulnerabilities/CVE-2021-23337"

}

]

}

},

{

"vulnerabilityName": "CVE-2020-8203",

"description": "Prototype pollution attack in lodash before 4.17.19.",

"vulnerabilityPublishedDate": "2020-07-15T17:15:00.000Z",

"baseScore": 7.4,

"severity": "HIGH",

"source": "NVD",

"remediationStatus": "NEW",

"cweId": "CWE-1321",

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/vulnerabilities/CVE-2020-8203"

}

}

],

"_meta": {

"totalCount": 4,

"links": []

}

}

This pattern of discovering URLs through _meta.links is fundamental to BlackDuck’s design. Fight the urge to hardcode URL patterns. Follow the links.

Upgrade Guidance

Found via the origin’s _meta.links:

GET /api/components/{comp-id}/versions/{ver-id}/origins/{origin-id}/upgrade-guidance

Authorization: Bearer {token}

Accept: application/vnd.blackducksoftware.component-detail-5+json

Returns both short-term and long-term upgrade recommendations:

{

"shortTermUpgrade": {

"componentName": "lodash",

"versionName": "4.17.21",

"vulnerabilityCount": 0

},

"longTermUpgrade": {

"componentName": "lodash",

"versionName": "5.0.0"

}

}

This is one of the most underrated features of the API. Instead of just saying “you have a vulnerability,” it tells you exactly where to go. Short-term gives you the closest safe version; long-term gives you the ideal target.

Dependency Paths: The Hidden Gem

Dependency paths let you trace how a vulnerable component actually entered your project — which matters a lot when the vulnerability is in something your team didn’t directly pull in:

GET /api/project/{project-id}/version/{version-id}/origin/{origin-id}/dependency-paths

Authorization: Bearer {token}

Example Response:

{

"totalCount": 1,

"items": [

{

"path": [

{

"componentName": "lodash",

"componentVersionName": "4.17.15",

"externalId": "lodash/4.17.15",

"_meta": {

"href": "https://blackduck.example.com/api/components/c1d2e3f4-5678-90ab-cdef-aaaaaaaaaaaa/versions/v1v2v3v4-5678-90ab-cdef-bbbbbbbbbbbb"

}

},

{

"componentName": "async",

"componentVersionName": "3.2.0",

"externalId": "async/3.2.0",

"_meta": {

"href": "https://blackduck.example.com/api/components/d2e3f4a5-6789-01bc-defg-dddddddddddd/versions/w2x3y4z5-6789-01bc-defg-eeeeeeeeeeee",

"links": [

{

"rel": "transitive-upgrade-guidance",

"href": "https://blackduck.example.com/api/components/d2e3f4a5-6789-01bc-defg-dddddddddddd/versions/w2x3y4z5-6789-01bc-defg-eeeeeeeeeeee/transitive-upgrade-guidance"

}

]

}

},

{

"componentName": "payment-service",

"componentVersionName": "main",

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888"

}

}

]

}

],

"_meta": {

"totalCount": 1,

"links": []

}

}

The path array reads from vulnerable component to direct dependency to your project. In this example, lodash (vulnerable) is pulled in by async (direct dependency), which is a dependency of payment-service.
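That ordering makes it easy to answer two questions about any path: which node is the direct dependency, and is the vulnerable component transitive at all? The helpers below are a sketch that assumes the ordering described above (vulnerable component first, project last) and that a two-node path means the component is itself a direct dependency. Both names are hypothetical.

```python
from typing import Optional


def direct_dependency_of(path: list) -> Optional[dict]:
    """Return the node just before the project, or None if the path is too short."""
    return path[-2] if len(path) >= 2 else None


def is_transitive_path(path: list) -> bool:
    """True when at least one component sits between the vulnerable one and the project."""
    return len(path) >= 3
```

For the example response, `direct_dependency_of(path)` returns the async node and `is_transitive_path(path)` is true, because lodash reaches payment-service only through async.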

Watch out for the URL pattern here — this endpoint uses singular project and version instead of the plural projects and versions you see everywhere else. This isn’t a typo. It’s a different URL pattern. I spent an embarrassing amount of time debugging 404s before I noticed.

Direct vs. Transitive: The Distinction That Changes Everything

Here’s where the real insight lives. The upgrade-guidance endpoint works great for direct dependencies — components your project explicitly declares. But what about transitive dependencies — libraries pulled in by your dependencies?

If lodash@4.17.15 has a vulnerability, but your project doesn’t directly depend on lodash — it comes in via async@3.2.0 — telling an engineer to “upgrade lodash” is useless. They can’t directly control that dependency. I’ve seen this create more frustration between security and engineering teams than almost anything else.

BlackDuck provides transitive-upgrade-guidance that answers the right question: “What version of the direct dependency should I upgrade to in order to get a fixed version of the transitive dependency?”

Here’s the workflow:

1. You find a vulnerable component (lodash@4.17.15)

2. You check: is this a direct or transitive dependency?

3. If transitive, fetch dependency-paths to find the direct dependency (async@3.2.0)

4. Look for "transitive-upgrade-guidance" link on that direct dependency

5. Fetch the guidance to learn: "Upgrade async to 3.2.4 to get lodash 4.17.21"

Fetching Transitive Upgrade Guidance:

The transitive-upgrade-guidance link appears on the direct dependency node in the dependency path:

GET /api/components/{direct-dep-id}/versions/{direct-dep-ver-id}/transitive-upgrade-guidance

Authorization: Bearer {token}

Accept: application/vnd.blackducksoftware.component-detail-5+json

Example Response:

{

"component": "async",

"versionName": "3.2.0",

"shortTermUpgradeGuidance": {

"versionName": "3.2.4",

"vulnerabilityCount": 0,

"transitiveUpgrades": [

{

"componentName": "lodash",

"fromVersion": "4.17.15",

"toVersion": "4.17.21",

"vulnerabilitiesResolved": 4

}

]

},

"longTermUpgradeGuidance": {

"versionName": "4.0.0",

"vulnerabilityCount": 0

}

}

That’s the shift. Your ticket to the engineer should say “Upgrade async from 3.2.0 to 3.2.4” rather than “Fix lodash vulnerability.” The engineer controls async; they don’t control lodash. This distinction is the difference between an actionable ticket and a frustrating one.

Implementation Pattern:

# When processing a component with vulnerabilities
guidance = None
if is_transitive(component):
    # Get the dependency path
    path_data = await fetch_dependency_paths(origin_id)

    # Find the direct dependency (second-to-last in path, before the project)
    path = path_data["items"][0]["path"]
    direct_dep = path[-2]  # Last is project, second-to-last is direct dep

    # Look for transitive-upgrade-guidance link (not every node has links)
    for link in direct_dep["_meta"].get("links", []):
        if link["rel"] == "transitive-upgrade-guidance":
            guidance = await fetch(link["href"])
            # Now you have actionable guidance for the engineer
            break
else:
    # Direct dependency - use regular upgrade-guidance
    guidance = await fetch_upgrade_guidance(origin_id)

Don’t Create Tickets Engineers Can’t Act On

Here’s an even more frustrating scenario I ran into: a transitive dependency has a vulnerability, but the direct dependency hasn’t released a version that fixes it. Creating a ticket in this case is worse than useless — it’s demoralizing. It erodes trust between security and engineering.

Vulnerability: CVE-2024-9999 in vulnerable-lib@1.0.0

Dependency path: vulnerable-lib@1.0.0 → parent-lib@2.3.0 → your-project

Transitive upgrade guidance for parent-lib@2.3.0:

{

"shortTermUpgradeGuidance": null, // No fix available!

"longTermUpgradeGuidance": null

}

The engineer receives a ticket saying “Fix CVE-2024-9999” but there is literally nothing they can do. The maintainer of parent-lib hasn’t updated their dependency on vulnerable-lib. The only options are:

- Wait (not actionable)

- Fork parent-lib and fix it yourself (rarely practical)

- Remove parent-lib entirely (often not feasible)

- Accept the risk (not the engineer’s decision)

None of these belong in an engineer’s sprint backlog.

Our Product Security team is very intentional about not giving engineering teams work they can’t act on — we have street cred to maintain, and nothing burns trust faster than unactionable tickets clogging a backlog. So the filtering logic became essential:

def should_create_ticket(vuln, dependency_path, transitive_guidance):
    """
    Determine if a vulnerability should result in an engineer ticket.

    Returns:
        (should_create: bool, reason: str, alternative_action: str)
    """
    # Direct dependency - actionable only if an upgrade exists
    if is_direct_dependency(vuln):
        if has_upgrade_available(vuln):
            return (True, "direct_with_fix", None)
        else:
            # Even direct deps might not have fixes yet
            return (False, "direct_no_fix", "watch_for_release")

    # Transitive dependency - check if upgrade path exists
    if transitive_guidance is None:
        return (False, "transitive_no_guidance", "monitor")

    short_term = transitive_guidance.get("shortTermUpgradeGuidance")
    long_term = transitive_guidance.get("longTermUpgradeGuidance")

    if short_term and short_term.get("versionName"):
        return (True, "transitive_with_fix", None)

    if long_term and long_term.get("versionName"):
        # Long-term fix exists but might be a major version bump
        return (True, "transitive_major_upgrade_needed", None)

    # No upgrade path available
    return (False, "transitive_no_fix_available", "escalate_to_security")

# Usage in your pipeline:
for vuln in vulnerabilities:
    path = get_dependency_path(vuln)  # hypothetical helper, like get_transitive_guidance
    guidance = get_transitive_guidance(vuln)
    should_create, reason, alternative = should_create_ticket(vuln, path, guidance)

    if should_create:
        create_jira_ticket(vuln, guidance)
    else:
        # Track differently - don't burden engineers
        log_unactionable_finding(vuln, reason)
        if alternative == "escalate_to_security":
            add_to_security_team_watchlist(vuln)
        elif alternative == "watch_for_release":
            add_to_release_monitor(vuln)

What To Do Instead of Creating Tickets:

| Situation | Alternative Action |
|---|---|
| No fix in any version of direct dep | Add to security team watchlist; monitor for upstream fix |
| Fix only in major version (breaking change) | Create planning ticket for tech debt/migration, not sprint work |
| Vulnerability is low severity + no fix | Document accepted risk; revisit quarterly |
| Direct dep is abandoned/unmaintained | Evaluate alternatives; create migration epic |

Separate Tracking for Unactionable Findings:

# Don't pollute your "open vulnerabilities" metric with things engineers can't fix
actionable_findings = []    # → Jira tickets for engineers
unactionable_findings = []  # → Security team dashboard

for vuln in all_vulnerabilities:
    if can_engineer_fix(vuln):
        actionable_findings.append(vuln)
    else:
        unactionable_findings.append({
            **vuln,
            "blocked_reason": "no_upstream_fix",
            "upstream_package": get_blocking_dependency(vuln),
            "monitoring_since": datetime.now(),
            "last_checked": datetime.now()
        })

# Security team reviews unactionable_findings weekly
# When upstream releases fix, move to actionable_findings

Your metrics should tell this story clearly:

Vulnerability Summary for payment-service:

├── Actionable (in engineering backlog): 12

│ ├── Critical: 2

│ ├── High: 5

│ └── Medium: 5

├── Awaiting Upstream Fix: 8

│ ├── No direct dep upgrade available: 5

│ └── Requires major version migration: 3

└── Accepted Risk: 3

└── Low severity, no fix, documented exception

Total known vulnerabilities: 23

Engineer-actionable: 12 (52%)

This gives leadership accurate visibility while ensuring engineers only see work they can actually complete. When I started presenting metrics this way, the conversations with engineering leadership got dramatically more productive.
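The headline numbers in that summary are a straightforward count. The sketch below assumes each finding has already been tagged with a `bucket` field; that field name and its values are hypothetical, not something BlackDuck returns.

```python
from collections import Counter


def summarize(findings: list) -> dict:
    """Count findings per bucket and compute the engineer-actionable share."""
    buckets = Counter(f["bucket"] for f in findings)
    total = sum(buckets.values())
    actionable = buckets.get("actionable", 0)
    percent = round(100 * actionable / total) if total else 0
    return {
        "total": total,
        "actionable": actionable,
        "actionable_percent": percent,
        "by_bucket": dict(buckets),
    }
```

With 12 actionable, 8 awaiting-upstream, and 3 accepted-risk findings, this reports a total of 23 with 52% actionable, matching the summary above.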

15 Vulns, 3 Tickets: Grouping by Remediation Action

Here’s a scenario I kept running into: a project has 15 vulnerable transitive dependencies, but they all trace back through just 3 direct dependencies. Creating 15 tickets is noise — you really have 3 remediation actions. Does the noise help anyone? It doesn’t.

The grouping strategy uses dependency path data to cluster vulnerabilities by the direct dependency that needs to be upgraded:

Raw findings from BlackDuck:

├── CVE-2021-23337 in lodash@4.17.15

├── CVE-2020-8203 in lodash@4.17.15

├── CVE-2021-44228 in log4j-core@2.14.1

├── CVE-2021-45046 in log4j-core@2.14.1

├── CVE-2021-45105 in log4j-core@2.14.1

├── CVE-2022-31692 in spring-security@5.6.0

└── CVE-2023-12345 in commons-text@1.9

After fetching dependency paths:

├── lodash@4.17.15 → pulled in by async@3.2.0

├── log4j-core@2.14.1 → pulled in by spring-boot-starter@2.6.0

├── spring-security@5.6.0 → pulled in by spring-boot-starter@2.6.0

└── commons-text@1.9 → pulled in by async@3.2.0

Grouped by remediation action:

├── Upgrade async@3.2.0 → 3.2.4

│ └── Fixes: CVE-2021-23337, CVE-2020-8203, CVE-2023-12345

└── Upgrade spring-boot-starter@2.6.0 → 2.7.8

└── Fixes: CVE-2021-44228, CVE-2021-45046, CVE-2021-45105, CVE-2022-31692

Result: 7 CVEs become 2 tickets. That’s the kind of signal-to-noise ratio that makes engineers trust your process.

Implementation:

from collections import defaultdict

def group_by_remediation(vulnerabilities, dependency_paths):
    """
    Group vulnerabilities by the direct dependency that needs upgrading.

    Returns a dict: {direct_dep_key: group dict listing the vulns fixed by upgrading it}
    """
    remediation_groups = defaultdict(lambda: {
        "direct_dependency": None,
        "current_version": None,
        "upgrade_to": None,
        "vulnerabilities": [],
        "transitive_components": set()
    })

    for vuln in vulnerabilities:
        component_id = vuln["_context"]["component_id"]
        origin_id = vuln["_context"].get("origin_id")

        # Find the dependency path for this component
        path_info = dependency_paths.get(origin_id)
        if not path_info:
            # Direct dependency - group by itself
            key = component_id
            remediation_groups[key]["direct_dependency"] = vuln["componentName"]
            remediation_groups[key]["vulnerabilities"].append(vuln)
            continue

        # Transitive dependency - group by the direct dependency
        path = path_info["items"][0]["path"]

        # Path structure: [vulnerable_comp, ..., direct_dep, project]
        # Direct dependency is second-to-last
        if len(path) >= 2:
            direct_dep = path[-2]
            direct_dep_name = direct_dep["componentName"]
            direct_dep_version = direct_dep["componentVersionName"]
            key = f"{direct_dep_name}@{direct_dep_version}"

            remediation_groups[key]["direct_dependency"] = direct_dep_name
            remediation_groups[key]["current_version"] = direct_dep_version
            remediation_groups[key]["vulnerabilities"].append(vuln)
            remediation_groups[key]["transitive_components"].add(
                f"{vuln['componentName']}@{vuln['componentVersionName']}"
            )

            # If we have transitive upgrade guidance, capture the target version.
            # The guidance (or its short-term entry) can be null when no fix exists.
            guidance = path_info.get("_transitive_upgrade_guidance") or {}
            short_term = guidance.get("shortTermUpgradeGuidance") or {}
            if short_term.get("versionName"):
                remediation_groups[key]["upgrade_to"] = short_term["versionName"]

    return dict(remediation_groups)

# Example output structure:

{

"async@3.2.0": {

"direct_dependency": "async",

"current_version": "3.2.0",

"upgrade_to": "3.2.4",

"vulnerabilities": [

{"vulnerabilityName": "CVE-2021-23337", "severity": "HIGH", ...},

{"vulnerabilityName": "CVE-2020-8203", "severity": "HIGH", ...},

{"vulnerabilityName": "CVE-2023-12345", "severity": "MEDIUM", ...}

],

"transitive_components": {"lodash@4.17.15", "commons-text@1.9"}

},

"spring-boot-starter@2.6.0": {

"direct_dependency": "spring-boot-starter",

"current_version": "2.6.0",

"upgrade_to": "2.7.8",

"vulnerabilities": [...],

"transitive_components": {"log4j-core@2.14.1", "spring-security@5.6.0"}

}

}

Creating Grouped Tickets:

Now your ticket creation logic generates one ticket per remediation action:

def create_remediation_ticket(group_key, group_data):
    vuln_count = len(group_data["vulnerabilities"])
    severities = [v["severity"] for v in group_data["vulnerabilities"]]
    highest_severity = get_highest_severity(severities)
    cve_list = ", ".join(v["vulnerabilityName"] for v in group_data["vulnerabilities"])
    # Sort so the ticket text is stable between runs (sets have no fixed order)
    transitive_list = ", ".join(sorted(group_data["transitive_components"]))

    return {
        "summary": f"Upgrade {group_data['direct_dependency']} to {group_data['upgrade_to']} "
                   f"({vuln_count} CVEs, {highest_severity})",
        "description": f"""
## Action Required

Upgrade **{group_data['direct_dependency']}** from `{group_data['current_version']}`
to `{group_data['upgrade_to']}`.

## Vulnerabilities Resolved ({vuln_count})

{cve_list}

## Affected Transitive Dependencies

This upgrade will update the following transitive dependencies:

{transitive_list}

## Why This Grouping?

These vulnerabilities are in transitive dependencies that you don't directly control.
Upgrading the direct dependency ({group_data['direct_dependency']}) will pull in
fixed versions of the affected libraries.
""",
        "priority": severity_to_priority(highest_severity),
        "labels": ["security", "dependency-upgrade", "grouped-remediation"]
    }
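The ticket builder above calls get_highest_severity and severity_to_priority without showing them. Here is a minimal sketch of both, assuming the four severity labels used in this post (CRITICAL, HIGH, MEDIUM, LOW) and a hypothetical P1 to P4 priority scheme; map these to whatever your tracker actually uses:

```python
# Severity labels ranked from most to least severe. The priority names
# (P1..P4) are placeholders, not anything BlackDuck defines.
SEVERITY_ORDER = ["CRITICAL", "HIGH", "MEDIUM", "LOW"]
PRIORITY_BY_SEVERITY = {"CRITICAL": "P1", "HIGH": "P2", "MEDIUM": "P3", "LOW": "P4"}


def get_highest_severity(severities):
    """Return the most severe label present, or "UNKNOWN" if none is recognised."""
    present = {s.upper() for s in severities if s}
    for label in SEVERITY_ORDER:
        if label in present:
            return label
    return "UNKNOWN"


def severity_to_priority(severity):
    """Map a severity label to a tracker priority, defaulting to the lowest."""
    return PRIORITY_BY_SEVERITY.get(severity, "P4")
```

Defaulting unknown severities to the lowest priority is a judgment call; you may prefer to flag them for manual review instead.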

Example Ticket Output:

Summary: Upgrade async to 3.2.4 (3 CVEs, HIGH)

## Action Required

Upgrade **async** from `3.2.0` to `3.2.4`.

## Vulnerabilities Resolved (3)

CVE-2021-23337, CVE-2020-8203, CVE-2023-12345

## Affected Transitive Dependencies

This upgrade will update the following transitive dependencies:

lodash@4.17.15, commons-text@1.9

## Why This Grouping?

These vulnerabilities are in transitive dependencies that you don't directly control.

Upgrading the direct dependency (async) will pull in fixed versions of the

affected libraries.

Why this approach matters:

- Reduced ticket noise: 50 CVEs might become 5 actionable tickets

- Clear ownership: Engineers know exactly what they need to change

- Accurate tracking: One ticket = one PR = one remediation action

- Better metrics: Your “open vulnerabilities” count reflects actual work remaining, not inflated transitive counts

Edge cases to handle — and you will hit all of these:

- Multiple paths: A transitive dependency might be pulled in by multiple direct dependencies. You may need to upgrade several, or pick the “primary” path.

- No upgrade available: Sometimes the direct dependency hasn’t released a version with the fix yet. These need different handling (watch for release, consider alternatives, accept risk).

- Direct dependencies with vulns: These don’t need path traversal — they’re their own remediation action.
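For the multiple-paths case, one option is to collect every direct dependency that pulls in the vulnerable component instead of reading only items[0]. This is a sketch, assuming each entry in items has the same path layout described earlier (vulnerable component first, project last); direct_dependencies_for is a hypothetical helper name:

```python
def direct_dependencies_for(path_info):
    """Return every distinct direct dependency (name@version) across all paths.

    Assumes path layout [vulnerable_comp, ..., direct_dep, project], so the
    direct dependency is the second-to-last entry of each path.
    """
    found = set()
    for item in (path_info or {}).get("items", []):
        path = item.get("path", [])
        if len(path) >= 2:
            dep = path[-2]
            found.add(f"{dep['componentName']}@{dep['componentVersionName']}")
    return sorted(found)
```

If this returns more than one entry, the vulnerability either belongs in several remediation groups or you pick one entry as the primary path and mention the others in the ticket.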

The Internal Library Problem Nobody Talks About

BlackDuck excels at identifying open-source components from public registries. But what happens when your organization has internal libraries that BlackDuck knows nothing about? This is one of those problems that doesn’t show up in any vendor demo.

What Goes Wrong

Consider a common enterprise pattern: your platform team maintains an internal UI framework — let’s call it @acme/ui-components — that bundles dozens of open-source libraries (React, styled-components, date-fns, etc.). Product teams consume this internal package rather than depending on the underlying libraries directly.

From BlackDuck’s perspective:

- It sees @acme/ui-components but doesn’t know what’s inside (it’s not in any public registry)

- It can’t trace vulnerabilities in bundled libraries back to your internal package

- Teams using your internal library appear to have no dependencies on the vulnerable components

The risk is real: a critical CVE in date-fns might affect every product using @acme/ui-components, but BlackDuck won’t flag it because it doesn’t know about the bundling relationship. I’ve seen this create massive blind spots in organizations that rely heavily on internal shared libraries.

Strategies That Actually Work

Option 1: Scan Internal Libraries Separately

Create BlackDuck projects for your internal libraries themselves:

BlackDuck Projects:

├── @acme/ui-components (scanned directly)

│ └── Shows: react, styled-components, date-fns, etc.

├── product-a (uses @acme/ui-components)

│ └── Shows: @acme/ui-components (as unknown/custom)

└── product-b (uses @acme/ui-components)

└── Shows: @acme/ui-components (as unknown/custom)

Your automation then needs to propagate findings: when a vulnerability is found in @acme/ui-components, create tickets for all products that consume it.

# Pseudo-code for propagating internal library findings
internal_lib_vulns = get_vulnerabilities("@acme/ui-components")
consumers = service_catalog.get_consumers("@acme/ui-components")

for vuln in internal_lib_vulns:
    for consumer in consumers:
        create_ticket(
            project=consumer,
            summary="Vulnerability in internal dependency @acme/ui-components",
            description=f"Update @acme/ui-components to get fix for {vuln.cve_id}",
            # Don't tell them to fix date-fns; they need to update the internal lib
        )

Option 2: Use Custom Component Definitions

BlackDuck supports defining custom components. You can register your internal libraries and manually specify their composition:

- Create a custom component in BlackDuck for @acme/ui-components

- Define its “virtual” bill of materials (the open-source libs it bundles)

- When products include your internal library, BlackDuck can trace through to the bundled components

This requires maintenance — every time @acme/ui-components updates its dependencies, you need to update the custom component definition. It doesn’t scale well, but for a handful of critical internal libraries, it works.

What I’d Recommend

Treat internal libraries as first-class projects: They should have their own BlackDuck scans, their own vulnerability tracking, and their own SLAs.

Track the consumer relationship: Your service catalog should know which products depend on which internal libraries. This is essential for impact analysis.

Automate propagation: When an internal library has a vulnerability, automatically notify (or ticket) consuming teams. Don’t wait for them to discover it themselves.

Version your internal libraries rigorously: If @acme/ui-components@2.3.0 has a vulnerability and @2.4.0 doesn’t, you need to know exactly which version each product uses.

Consider SBOMs: Software Bill of Materials (SBOM) standards like CycloneDX and SPDX are designed for exactly this problem: representing nested component relationships. Generating SBOMs for internal libraries and including them in product SBOMs creates full visibility.

The internal library problem is fundamentally an organizational challenge, not just an API challenge. BlackDuck gives you the scanning capabilities, but you need process and tooling to connect the dots across internal dependencies.

Policy Violations: Not All Rules Deserve Tickets

Policies in BlackDuck are rules that automatically flag components based on configurable criteria. They’re a powerful governance mechanism, but here’s something I had to learn through experience: not all policy violations should be handled the same way.

Types of Policies:

| Policy Type | What It Detects | Example Rules |

|---|---|---|

| Security | Vulnerabilities by severity, age, or exploitability | “Block components with CRITICAL CVEs” |

| License | License types that conflict with your usage | “Flag GPL/AGPL in proprietary code” |

| Operational | Component age, popularity, or maintenance status | “Warn on components not updated in 2+ years” |

| Custom | Organization-specific rules | “Block components from untrusted publishers” |

Why this matters for your workflow:

You might be tempted to create tickets for every policy violation. I was. Here’s why that’s a mistake:

Security policy violations often duplicate the vulnerability data you’re already processing. If you’re creating tickets based on the vulnerabilities endpoint (as I described earlier), creating additional tickets for security policy violations means double-ticketing the same issue. We skip security policy violations in our ticket creation — we handle those through the vulnerability workflow with proper upgrade guidance and grouping.

License policy violations are different. A component with a GPL license isn’t something that shows up in vulnerability data. These require separate tickets with different remediation actions (replace the component, obtain a license, or get legal approval). We do create tickets for these.

Operational policy violations (like “component is deprecated”) are useful signals but may not warrant immediate tickets. These often feed into tech debt tracking rather than security backlogs.

The key insight: query only the policy rules relevant to your ticketing workflow.

# We only create tickets for license-related policies

LICENSE_POLICY_RULES = [

"Copyleft License Restriction",

"Commercial License Required",

"License Conflict Detection"

]

# Security policies are handled via vulnerability workflow - skip them here

SKIP_POLICY_RULES = [

"High Severity Vulnerabilities",

"Critical CVE Detection",

"CISA KEV Components"

]

To get policy violations, you first need the policy rule UUID:

GET /api/policy-rules?limit=1000

Authorization: Bearer {token}

Accept: application/vnd.blackducksoftware.policy-5+json

Example Policy Rules Response:

{

"totalCount": 5,

"items": [

{

"name": "High Severity Vulnerabilities",

"description": "Block components with critical or high severity CVEs",

"enabled": true,

"overridable": true,

"severity": "BLOCKER",

"expression": {

"operator": "AND",

"expressions": [

{

"name": "vuln_severity",

"parameters": {"values": ["CRITICAL", "HIGH"]}

}

]

},

"_meta": {

"href": "https://blackduck.example.com/api/policy-rules/p1p2p3p4-5678-90ab-cdef-ffffffffffff"

}

},

{

"name": "Copyleft License Restriction",

"description": "Flag components with GPL and AGPL licenses",

"enabled": true,

"severity": "MAJOR",

"_meta": {

"href": "https://blackduck.example.com/api/policy-rules/q1q2q3q4-5678-90ab-cdef-gggggggggggg"

}

}

],

"_meta": {

"totalCount": 5,

"links": []

}

}

Then query for violating components:

GET /api/projects/{project-id}/versions/{version-id}/components?filter=bomPolicy:in_violation&filter=policyRuleViolation:PR~{policy-uuid}&limit=1000

Example Policy Violations Response:

{

"totalCount": 3,

"items": [

{

"componentName": "log4j-core",

"componentVersionName": "2.14.1",

"policyStatus": "IN_VIOLATION",

"approvalStatus": "NOT_REVIEWED",

"securityRiskProfile": {

"counts": [

{"countType": "CRITICAL", "count": 1},

{"countType": "HIGH", "count": 0}

]

},

"policyRuleViolations": [

{

"name": "High Severity Vulnerabilities",

"severity": "BLOCKER"

}

],

"_meta": {

"href": "https://blackduck.example.com/api/projects/f47ac10b-58cc-4372-a567-0e02b2c3d479/versions/e8b7d6c5-4a3b-2c1d-0e9f-888888888888/components/l1l2l3l4-5678-90ab-cdef-hhhhhhhhhhhh"

}

}

],

"_meta": {

"totalCount": 3,

"links": []

}

}

The filter syntax uses PR~ prefix for policy rule UUIDs — another undocumented quirk that took me a while to figure out.
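Putting the two calls together, here is a sketch of how the rule lookup can feed the violations query. The helper names are hypothetical, skipping disabled rules is my assumption, and the query string is assembled by hand so the filter values stay exactly as shown above:

```python
def policy_rule_uuids(rules_response, wanted_names):
    """Map policy rule name -> UUID for the enabled rules we care about.

    The UUID is taken from the last path segment of each rule's _meta.href.
    """
    uuids = {}
    for rule in rules_response.get("items", []):
        if rule.get("name") in wanted_names and rule.get("enabled", False):
            href = rule.get("_meta", {}).get("href", "")
            uuids[rule["name"]] = href.rstrip("/").rsplit("/", 1)[-1]
    return uuids


def violations_url(base_url, project_id, version_id, rule_uuid, limit=1000):
    """Build the components query for one policy rule, using the PR~ prefix."""
    return (
        f"{base_url}/api/projects/{project_id}/versions/{version_id}/components"
        "?filter=bomPolicy:in_violation"
        f"&filter=policyRuleViolation:PR~{rule_uuid}"
        f"&limit={limit}"
    )
```

You would call policy_rule_uuids once per run with your license rule names, then issue one violations query per rule and project version.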

Routing different policy types:

def process_policy_violation(violation, policy_rule):
    """Route policy violations to appropriate workflows."""
    policy_type = categorize_policy(policy_rule)

    if policy_type == "security":
        # Don't create ticket - handled via vulnerability workflow
        # This avoids double-ticketing the same CVE
        log.info("Skipping security policy violation - handled via vuln workflow")
        return None

    elif policy_type == "license":
        # Create ticket - this is net-new information
        return create_license_violation_ticket(
            component=violation["componentName"],
            version=violation["componentVersionName"],
            license=extract_license_info(violation),
            policy_rule=policy_rule["name"],
            severity=policy_rule["severity"]
        )

    elif policy_type == "operational":
        # Add to tech debt tracker, not security backlog
        return add_to_tech_debt_backlog(violation, policy_rule)

    else:
        # Unknown policy type - log for review
        log.warning(f"Unknown policy type: {policy_rule['name']}")
        return None
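The categorize_policy helper is not shown above. One simple way to write it is a name lookup against the rule lists defined earlier. This is a sketch: matching on rule names is an assumption (you could also inspect each rule’s expression), and the operational rule name is a made-up example:

```python
LICENSE_POLICY_RULES = {
    "Copyleft License Restriction",
    "Commercial License Required",
    "License Conflict Detection",
}
SECURITY_POLICY_RULES = {
    "High Severity Vulnerabilities",
    "Critical CVE Detection",
    "CISA KEV Components",
}
# Hypothetical example; substitute the operational rules your instance defines.
OPERATIONAL_POLICY_RULES = {"Stale Component Warning"}


def categorize_policy(policy_rule):
    """Classify a policy rule as security, license, operational, or unknown."""
    name = policy_rule.get("name", "")
    if name in SECURITY_POLICY_RULES:
        return "security"
    if name in LICENSE_POLICY_RULES:
        return "license"
    if name in OPERATIONAL_POLICY_RULES:
        return "operational"
    return "unknown"
```

Name matching is brittle if someone renames a rule in the BlackDuck UI, which is why the "unknown" branch logging a warning matters.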

License compliance is a deep topic with its own nuances — understanding copyleft vs. permissive licenses, license compatibility matrices, and legal review workflows. That’s a whole separate post.

Pagination: Just Follow the Links

BlackDuck uses link-based pagination. Every paginated response includes a _meta object:

{

"items": [...],

"_meta": {

"totalCount": 500,

"links": [

{"rel": "paging-next", "href": "https://...?offset=100"},

{"rel": "paging-previous", "href": "https://...?offset=0"}

]

}

}

The pattern is simple: look for a link with rel: "paging-next". If it exists, follow it. If not, you’ve reached the end. Don’t try to calculate offsets yourself.

next_url = None
for link in response.get("_meta", {}).get("links", []):
    if link.get("rel") == "paging-next":
        next_url = link.get("href")
        break
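Wrapped in a loop, the same link lookup becomes a generator that walks every page. This is a sketch: fetch_json is a hypothetical callable that takes a URL and returns the parsed JSON body, standing in for whatever HTTP client you use:

```python
def iter_items(fetch_json, first_url):
    """Yield every item across all pages by following paging-next links."""
    url = first_url
    seen = set()
    while url and url not in seen:
        seen.add(url)  # guard against a next link that loops back on itself
        page = fetch_json(url)
        yield from page.get("items", [])
        url = next(
            (
                link.get("href")
                for link in page.get("_meta", {}).get("links", [])
                if link.get("rel") == "paging-next"
            ),
            None,
        )
```

Because it is a generator, callers can start processing the first page while later pages are still unfetched, which pairs well with writing results incrementally.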

Error Handling That Saved My Production Runs

Retry with Exponential Backoff

Network hiccups happen, especially on long-running collections. Exponential backoff isn’t optional:

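# Assuming the tenacity library, whose decorator names these match
from tenacity import retry, stop_after_attempt, wait_exponential

@retry(
    stop=stop_after_attempt(3),
    wait=wait_exponential(multiplier=1, min=4, max=10)
)
async def _make_request(self, client, url):
    # ... request logic
    ...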

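This means: try up to 3 times, pausing between attempts, then give up. One caveat if these are tenacity’s decorators: wait_exponential starts below the 4-second floor, so with only 3 attempts both pauses clamp to min=4 (roughly 4 seconds, then 4 seconds) rather than growing; the 10-second cap only comes into play with more attempts. Simple, effective, and it handles the intermittent 500s that BlackDuck throws under load.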
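If you would rather not depend on a retry library, the same idea fits in a few lines. This is a sketch, not the production collector’s code; retry_async, its parameters, and the choice to retry on any exception are all assumptions:

```python
import asyncio


async def retry_async(make_call, attempts=3, base_delay=4.0, max_delay=10.0):
    """Await make_call() up to `attempts` times with exponential backoff.

    The delay doubles after each failure (base_delay, 2x, 4x, ...) and is
    capped at max_delay. The last failure is re-raised to the caller.
    """
    for attempt in range(1, attempts + 1):
        try:
            return await make_call()
        except Exception:
            if attempt == attempts:
                raise
            delay = min(base_delay * 2 ** (attempt - 1), max_delay)
            await asyncio.sleep(delay)
```

With the defaults, the pauses are 4 and then 8 seconds. In a real collector you would narrow the except clause to network errors and 5xx responses so that a 404 does not get retried.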

Handling 401 Unauthorized

A 401 during normal operation usually means your bearer token expired. The fix is to re-authenticate and retry immediately:

if response.status_code == 401:
    # Token might have expired, try to re-authenticate once
    if await self._ensure_authenticated(client):
        # Retry the request with fresh authentication
        response = await client.get(url, headers=self.headers)
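The reactive retry covers surprises, but since the authenticate response reports expiresInMilliseconds, you can also refresh shortly before expiry and avoid most 401s. This is a sketch: BearerTokenCache and the authenticate callable are hypothetical, and the five-minute margin is an arbitrary choice:

```python
import time


class BearerTokenCache:
    """Tracks a bearer token and refreshes it shortly before it expires.

    authenticate is any callable returning the parsed JSON from
    POST /api/tokens/authenticate, i.e. a dict with "bearerToken" and
    "expiresInMilliseconds".
    """

    def __init__(self, authenticate, refresh_margin_s=300, clock=time.monotonic):
        self._authenticate = authenticate
        self._margin = refresh_margin_s
        self._clock = clock
        self._token = None
        self._expires_at = 0.0

    def get(self):
        """Return a token that is valid for at least refresh_margin_s more."""
        if self._token is None or self._clock() >= self._expires_at - self._margin:
            body = self._authenticate()
            self._token = body["bearerToken"]
            self._expires_at = self._clock() + body["expiresInMilliseconds"] / 1000
        return self._token
```

Call get() when building headers for each request; it only hits the authenticate endpoint when the cached token is missing or close to expiry.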

Handling 406 Not Acceptable

Sometimes the documented Accept header doesn’t work. I already mentioned this, but it’s worth showing the fallback pattern:

if response.status_code == 406:
    # Retry without Accept header (only Authorization)
    retry_headers = {"Authorization": self.headers["Authorization"]}
    response = await client.get(url, headers=retry_headers)

Performance: Because Thousands of Projects Don’t Process Themselves

Processing thousands of projects with millions of components requires careful performance tuning. Here’s what I landed on after several iterations:

Connection Pooling

connection_limits = httpx.Limits(

max_connections=20,

max_keepalive_connections=10,

)

Parallel Processing

Process multiple projects and components concurrently:

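# itertools.batched needs Python 3.12+; on older versions use your own chunking helper
from itertools import batched

# Process 5 projects in parallel
batch_size = 5
for batch in batched(projects, batch_size):
    await asyncio.gather(*[process_project(p) for p in batch])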

I started with a batch size of 10 and quickly learned that BlackDuck’s rate limiting doesn’t appreciate it. 5 is the sweet spot for our instance.
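One limitation of fixed batches is that each batch waits for its slowest project before the next batch starts. A semaphore keeps the same cap on concurrency but starts a new project as soon as a slot frees up. This is a sketch; process_all is a hypothetical name and process_project is whatever coroutine you already have:

```python
import asyncio


async def process_all(projects, process_project, max_concurrent=5):
    """Run process_project over all projects, at most max_concurrent at once.

    Exceptions are returned in place of results so one bad project does not
    cancel the rest.
    """
    semaphore = asyncio.Semaphore(max_concurrent)

    async def guarded(project):
        async with semaphore:
            return await process_project(project)

    return await asyncio.gather(
        *(guarded(p) for p in projects), return_exceptions=True
    )
```

Results come back in input order, so you can zip them with the project list and log whichever entries are exceptions.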

Timeouts

Set both global and per-project timeouts to prevent runaway collections:

# Global: 8 hours total

# Per-project: 30 minutes max

await asyncio.wait_for(

process_project(project),

timeout=30 * 60 # seconds

)
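The two budgets can be nested: an inner wait_for per project and an outer one around the whole run. This sketch processes projects sequentially to keep it short; the function name and the default budgets (taken from the comments above) are assumptions about how you would wire it up:

```python
import asyncio


async def process_with_timeouts(projects, process_project,
                                per_project_s=30 * 60, global_s=8 * 60 * 60):
    """Process projects one by one under a per-project and a global budget.

    Returns (completed, timed_out) lists of projects.
    """
    completed, timed_out = [], []

    async def run_all():
        for project in projects:
            try:
                await asyncio.wait_for(process_project(project), timeout=per_project_s)
                completed.append(project)
            except asyncio.TimeoutError:
                timed_out.append(project)  # skip this project, keep going

    try:
        await asyncio.wait_for(run_all(), timeout=global_s)
    except asyncio.TimeoutError:
        pass  # global budget exhausted; report whatever finished
    return completed, timed_out
```

Returning the timed-out projects explicitly makes it easy to retry just those on the next run instead of discovering the gap later.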

Incremental Writes

Don’t wait until the end to save data. Write after each project completes:

# After processing each project

await write_project_batch(project_data, db_connection)

I learned this one the painful way — a 6-hour collection that crashed at hour 5 with nothing saved. Now I write incrementally. Always.
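As an illustration of what a per-project write can look like, here is a synchronous sketch using SQLite from the standard library. The table layout and the save_project_findings name are hypothetical; the production collector uses its own async writer and schema:

```python
import json
import sqlite3


def save_project_findings(conn, project_id, findings):
    """Persist one project's findings in a single transaction.

    Re-running a project replaces its earlier rows, so a restarted
    collection can safely redo the project it crashed on.
    """
    with conn:  # commits on success, rolls back on exception
        conn.execute(
            "CREATE TABLE IF NOT EXISTS findings (project_id TEXT, payload TEXT)"
        )
        conn.execute("DELETE FROM findings WHERE project_id = ?", (project_id,))
        conn.executemany(
            "INSERT INTO findings (project_id, payload) VALUES (?, ?)",
            [(project_id, json.dumps(f)) for f in findings],
        )
```

The delete-then-insert inside one transaction is what makes the write idempotent: a crash mid-project leaves either the old rows or the new ones, never a mix.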

Context Tracking: Embed Parent IDs or Regret It Later

When storing hierarchical data, always embed parent IDs for easier querying later:

{

"vulnerabilityName": "CVE-2025-1234",

"severity": "HIGH",

"_context": {

"project_id": "aaaaaaaa-1111-2222-3333-bbbbbbbbbbbb",

"version_id": "eeeeeeee-7777-8888-9999-ffffffffffff",

"component_id": "iiiiiiii-1111-2222-3333-jjjjjjjjjjjj",

"component_version_id": "kkkkkkkk-4444-5555-6666-llllllllllll"

}

}

This _context pattern enables joining across tables without complex URL parsing. I wish I’d done this from the start instead of retrofitting it three months in.

Extracting IDs from URLs

BlackDuck URLs follow predictable patterns. The ID is always the last path segment:

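def _extract_id_from_url(url: str) -> str:
    # Drop any query string and trailing slash so the last segment is the ID
    path = url.split("?", 1)[0].rstrip("/")
    return path.rsplit("/", 1)[-1] if path else ""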

Examples:

/api/projects/abc-123 -> abc-123

/api/components/xyz-789/versions/ver-456 -> ver-456
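When filling in _context, it can help to pull every ID out of a _meta.href in one pass instead of only the last one. This is a sketch, assuming the path alternates resource names and IDs after /api/; given the URL inconsistencies mentioned in this post, treat the keys it returns as hints, not guarantees. ids_from_url is a hypothetical helper:

```python
from urllib.parse import urlparse


def ids_from_url(url):
    """Split a BlackDuck resource URL into {resource_name: id} pairs.

    Example path shape: /api/projects/{id}/versions/{id}/components/{id}
    """
    segments = [s for s in urlparse(url).path.split("/") if s]
    if segments and segments[0] == "api":
        segments = segments[1:]
    # Pair up (name, id); a trailing unpaired name is dropped by zip.
    return dict(zip(segments[0::2], segments[1::2]))
```

Query strings are ignored because only the parsed path is split, so hrefs copied from paginated responses work unchanged.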

The Complete Collection Flow

Putting it all together, here’s the data flow I ended up with:

- Authenticate -> Get bearer token

- Get project URLs -> From your asset inventory

- For each project:

- Fetch project details

- Fetch project hierarchy (if projectGroup exists)

- Fetch all versions

- Filter to relevant versions (e.g., “main”, “master”)

- Find the latest version by timestamp

- For the latest version:

- Fetch all components (with pagination)

- For each component:

- Extract origins

- Fetch vulnerabilities (via _meta.links)

- For each origin: Fetch upgrade guidance (via _meta.links)

- Fetch dependency paths

- If policy rules configured:

- Look up policy rule UUIDs

- Fetch violating components

It looks clean in a list. In practice, it’s thousands of API calls with retry logic, token refresh, pagination, and error handling at every step.

Version Naming Is Chaos, and That’s an Organizational Problem

In enterprise organizations, you’ll quickly discover that version naming is chaos. Engineering organizations with dozens or hundreds of teams each make independent decisions about CI/CD configuration, Git workflows, and BlackDuck integration. The result?

- Team A uses main

- Team B uses develop

- Team C uses master (legacy)

- Team D uses semantic versioning like 1.2.3

- Team E uses release branches like release/2025-Q1

Why This Breaks Your Collector

If you’re a product security team trying to create actionable findings for engineers, you need to know which version represents “current production code.” Without that clarity, several problems emerge:

Duplicate findings: The same vulnerability appears in main, develop, and 1.2.3, creating three tickets for what engineers experience as one issue.

Stale findings: You track vulnerabilities in develop, but the team shipped from release/2025-Q1. Your findings don’t match what’s actually deployed.

Conflicting remediation tracking: A vulnerability is marked “fixed” in one version but still open in another. Did the team actually remediate it?

Noise in metrics: Your vulnerability counts are inflated by version proliferation, making it hard to understand true risk posture.

Shield Engineers From Your Tools

Many product security teams — mine included — aim to shield engineers from the underlying tools. The goal: engineers receive tickets in their backlog with all the context they need — component name, vulnerability details, remediation guidance — without ever logging into BlackDuck.

This philosophy breaks down when version inconsistency creeps in. If your automation doesn’t know which version to pull findings from, you can’t generate clean, actionable tickets. Engineers end up confused by duplicates or findings that don’t match their reality. And once they lose trust in your tickets, getting it back is hard.

What We Tried

Option 1: Mandate a Standard Version Name

The simplest approach: require all teams to scan a version named main (or whatever you choose). This works well for organizations that can enforce standards.

# In your collector configuration

BLACKDUCK_VERSION_NAMES=main,master

The downside: some teams legitimately need different workflows, and forcing uniformity creates friction. This is where we landed — it got us most of the way there and was the fastest to implement.

Option 2: Track Version Names in a Service Catalog

Maintain a configuration management database (CMDB) or service catalog that maps each project to its “canonical” version name:

| Project | Canonical Version | Team | Notes |

|---|---|---|---|

| payment-service | main | Platform | Standard workflow |

| legacy-api | master | Legacy | Migrating to main in Q2 |

| mobile-app | release/* | Mobile | Uses release branches |

Your collector queries this catalog to determine which version to process for each project. This adds operational overhead but provides flexibility. This is where we want to go next — tying version selection into our service catalog so teams can own their own configuration without us hardcoding assumptions.
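The lookup itself can stay small. This sketch uses shell-style patterns so that an entry like release/* works; the catalog dict mirrors the table above, and the versionName field, the function name, and the "main" fallback are assumptions:

```python
from fnmatch import fnmatchcase

# Hypothetical catalog rows, mirroring the table above.
CANONICAL_VERSION_PATTERNS = {
    "payment-service": "main",
    "legacy-api": "master",
    "mobile-app": "release/*",
}


def canonical_versions(project_name, versions,
                       catalog=CANONICAL_VERSION_PATTERNS, default_pattern="main"):
    """Return the versions whose name matches the project's catalog pattern.

    Projects missing from the catalog fall back to default_pattern.
    """
    pattern = catalog.get(project_name, default_pattern)
    return [v for v in versions if fnmatchcase(v["versionName"], pattern)]
```

A wildcard pattern can match several versions, so you would still pick one afterwards, for example the most recently updated.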

Option 3: Use “Latest by Timestamp” with Filtering

Another approach: collect all versions that match an allowed list, then select the one with the most recent settingUpdatedAt timestamp. This handles teams that use different names while still selecting a single authoritative version per project.

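# Filter to allowed version names
# (assumes each version dict carries its name under "versionName")
allowed = {"main", "master", "develop"}
candidates = [v for v in versions if v["versionName"] in allowed]

# Then find the latest among the filtered versions (None if nothing matched)
latest = max(candidates, key=lambda v: v["settingUpdatedAt"], default=None)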

When Multiple Versions Are Actually Legitimate

Not all multi-version scenarios are problems. Some are legitimate business requirements:

Shipped Software: If you distribute software that customers install and run in their own environments, you need to track the exact vulnerability profile of each release. When a customer reports they’re running version 2.4.1, you need to know exactly which CVEs affect that release — not just what’s in your current main branch.

Long-Term Support (LTS): Organizations maintaining multiple supported versions (e.g., v3.x and v4.x simultaneously) need findings for each. A critical CVE might be exploitable in v3.x but already patched in v4.x.

Regulatory Requirements: Some compliance frameworks require demonstrating the exact composition of released software. You may need to preserve and query historical version data.

For these use cases, the solution isn’t to limit versions — it’s to clearly segment findings by release and ensure your ticketing system can handle this complexity without creating noise in active backlogs.

Building Rigor Into Your Process

The BlackDuck API gives you capabilities, but you need process to use them effectively:

Document your version strategy: What version name(s) are valid for each project type? Where is this tracked?

Automate enforcement: Your CI/CD integration should fail or warn if a project doesn’t have the expected version configured.

Audit regularly: Which projects have unexpected version proliferation? Are teams following the documented standard?

Design your ticketing integration carefully: How do findings map to backlogs? What happens when a vulnerability exists in multiple versions?

Plan for exceptions: Some teams will have legitimate needs for non-standard workflows. Have a process for documenting and handling these.

The API doesn’t solve organizational problems — but understanding its version model helps you design processes that work with it rather than fighting against it.

What I Wish I’d Known From Day One

Accept headers matter but aren’t always consistent. Build in fallback logic from the start.

Bearer tokens expire. Refresh proactively, not reactively. A crashed 3-hour run teaches you this once.

Follow the links. Don’t hardcode URLs; use _meta.links to discover related resources. The URL patterns aren’t even consistent across endpoints.

Pagination is everywhere. Always check for paging-next. I’ve seen endpoints return partial data silently when you don’t paginate.

Plan for scale from day one. Connection pooling, parallel processing, and incremental writes aren’t optional when you’re processing thousands of projects.

Expect inconsistencies. The API evolved over time, and URL patterns like /project/ vs /projects/ reflect this history. Don’t assume uniformity.

Separate actionable from unactionable. This is the single biggest thing you can do to build trust with engineering teams.

Group by remediation, not by CVE. Engineers don’t fix vulnerabilities — they upgrade dependencies. Model your tickets accordingly.

The BlackDuck API is powerful once you understand its patterns. But the real challenge isn’t the API — it’s building the organizational processes around it that turn raw vulnerability data into work engineers can actually do. Hopefully this saves you the months of trial and error my team went through building our collector.

Built from real-world experience processing hundreds of projects with millions of components. The code patterns shown here are simplified from our production collector — your implementation details will vary, but the principles hold.